
12/6/2023

Airflow macros example

The Airflow engine passes a few variables by default that are accessible in all templates. One of them is the previous execution date as YYYY-MM-DD.

Some macros take arguments. The Hive max_partition macro, for instance, accepts a filter map: only partitions matching all partition_key:partition_value pairs will be considered as candidates of max partition. Its field (str) argument is the field to get the max value from.

Macros are used inside DAG definitions. One example DAG uses datetime(2022, 1, 1) as its start and begins with a start task built with EmptyOperator, followed by a section-1 task built with SubDagOperator.

Here's a basic example DAG: it defines four Tasks (A, B, C, and D) and dictates the order in which they have to run, and which tasks depend on what others.
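To make the idea of "which tasks depend on what others" concrete, here is a minimal plain-Python sketch that does not use Airflow at all. It relies on the standard library's graphlib module to derive a valid run order for four tasks. The specific edges (A before B and C, both before D) are an assumption for illustration; the text above does not state them.

```python
from graphlib import TopologicalSorter

# Each key maps a task to the set of tasks that must finish before it starts.
# These edges are assumed for illustration only.
upstream = {
    "A": set(),
    "B": {"A"},
    "C": {"A"},
    "D": {"B", "C"},
}

# static_order() yields the tasks so that every task appears after its upstream tasks.
run_order = list(TopologicalSorter(upstream).static_order())
print(run_order)
```

B and C have no dependency on each other, so either may come first. That is the kind of freedom a scheduler can use to run tasks in parallel.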

A DAG (Directed Acyclic Graph) is the core concept of Airflow, collecting Tasks together, organized with dependencies and relationships to say how they should run.

Macros in Apache Airflow are a set of pre-defined variables or functions that can be used to perform specific operations. Variables and macros can be used in templates (see the Jinja Templating section), and a number of them come for free out of the box with Airflow. Additional custom macros can be added globally through Plugins, or at a DAG level through the DAG.user_defined_macros argument.

There are 2 mechanisms for passing variables in Airflow: (1) Jinja templating and (2) operator-specific properties. With approach (1), variables can be passed via the user_defined_macros property at the DAG level. With approach (2), you should look at the properties of the specific operator.

One example DAG, example_dag_decorator, is described as a "DAG to send server IP to email". It is declared with schedule=None, start_date=pendulum.datetime(2021, 1, 1, tz="UTC") and catchup=False, takes an email argument, fetches the IP with a GetRequestOperator task (task_id="get_ip"), and builds the message in a prepare_email function declared with multiple_outputs=True.

The logical date (formerly known as execution date) describes the intended time a DAG run is scheduled or triggered. It is called logical because of the abstract nature of it having multiple meanings, depending on the context of the DAG run itself. For example, if a DAG run is manually triggered by the user, its logical date is the date and time at which the DAG run was triggered, and the value should be equal to the DAG run's start date. However, when the DAG is being automatically scheduled, with a certain schedule interval put in place, the logical date indicates the time that marks the start of the data interval, and the DAG run's start date is then the logical date + scheduled interval.
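The relationship between the logical date and the schedule interval for an automatically scheduled run can be sketched with plain datetime arithmetic. This is only an illustration of the rule stated above, not Airflow's implementation; the function name scheduled_run_window and the sample dates are hypothetical.

```python
from datetime import datetime, timedelta


def scheduled_run_window(logical_date: datetime, interval: timedelta) -> tuple[datetime, datetime]:
    """Return (data_interval_start, data_interval_end) for a scheduled run.

    The logical date marks the start of the data interval; the run itself
    starts once the interval has elapsed, at logical_date + interval.
    """
    return logical_date, logical_date + interval


# Hypothetical daily schedule with a logical date of 1 January 2022.
interval_start, interval_end = scheduled_run_window(
    datetime(2022, 1, 1), timedelta(days=1)
)
print(interval_start, interval_end)
```

For a manually triggered run there is no interval to wait for, which is why the logical date and the run's start date coincide in that case.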
A DAG run will have a start date when it starts, and an end date when it ends. This period describes the time when the DAG actually 'ran.' The logical date is a separate value from the DAG run's start and end date.

In much the same way a DAG instantiates into a DAG Run every time it's run, Tasks specified inside a DAG are also instantiated into Task Instances along with it. During a backfill, the DAG Runs may all be started on the same actual day, but each DAG Run has one data interval covering a single day of the backfilled period, and that data interval is all that the tasks, operators and sensors inside the DAG look at when they run.

A common question is how to access dynamic values in Airflow Variables: is there any way to insert the DAG name and the DateTime.now value at run time into a value that was defined in the DAG file? The final result would be something like this: 'Started 0dag1 on 22-Sept-2021 12:00:00'.
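The underlying idea is to store the text with unresolved placeholders and fill them in only when the task runs. Below is a minimal plain-Python sketch of that deferral using str.format. It is not Airflow code: the names MESSAGE_TEMPLATE and render_message are hypothetical, and in Airflow the same role is played by Jinja templating, where template variables are resolved at run time.

```python
from datetime import datetime

# Hypothetical stored value: the placeholders stay unresolved until run time.
MESSAGE_TEMPLATE = "Started {dag_name} on {started_at:%d-%b-%Y %H:%M:%S}"


def render_message(template: str, dag_name: str, started_at: datetime) -> str:
    """Fill the placeholders with values that are only known when the task runs."""
    return template.format(dag_name=dag_name, started_at=started_at)


# Sample values taken from the question above; a real task would pass datetime.now().
message = render_message(MESSAGE_TEMPLATE, "0dag1", datetime(2021, 9, 22, 12, 0, 0))
print(message)
```

The month abbreviation comes from strftime's %b directive, so it depends on the active locale and will not reproduce the "Sept" spelling from the question.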

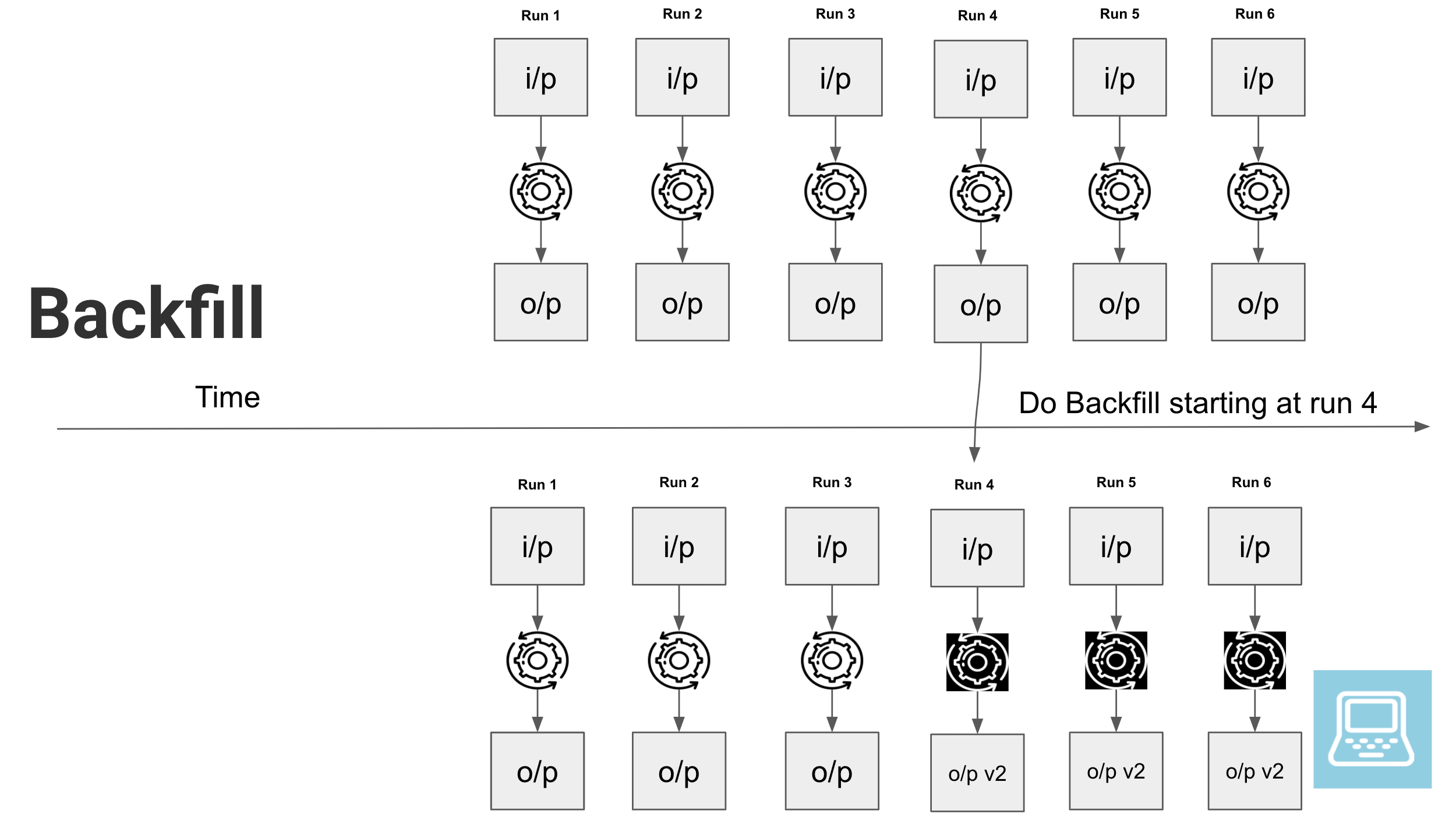

Every time you run a DAG, you are creating a new instance of that DAG which Airflow calls a DAG Run. DAG Runs can run in parallel for the same DAG, and each has a defined data interval, which identifies the period of data the tasks should operate on.

As an example of why this is useful, consider writing a DAG that processes a daily set of experimental data. It's been rewritten, and you want to run it on the previous 3 months of data. That is no problem, since Airflow can backfill the DAG and run copies of it for every day in those previous 3 months, all at once.

For more information on schedule values, see DAG Run. If schedule is not enough to express the DAG's schedule, see Timetables. For more information on logical date, see Data Interval.

For more example DAGs, see how others have used Airflow for GCP. A lot of inspiration for these queries was taken from the examples shared by Felipe Hoffa for Hacker News and GitHub Archive.
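The backfill scenario described above (one DAG Run per day across the previous 3 months) can be sketched by enumerating the daily data intervals. This is a plain-Python illustration under stated assumptions, not how Airflow computes intervals; the helper daily_data_intervals and the chosen dates are hypothetical.

```python
from datetime import date, timedelta


def daily_data_intervals(first_day: date, end_day: date) -> list[tuple[date, date]]:
    """Return one (start, end) interval per day in the half-open range [first_day, end_day)."""
    intervals = []
    current = first_day
    while current < end_day:
        next_day = current + timedelta(days=1)
        intervals.append((current, next_day))
        current = next_day
    return intervals


# Hypothetical 3 month window: 1 January through 31 March 2023.
intervals = daily_data_intervals(date(2023, 1, 1), date(2023, 4, 1))
print(len(intervals), intervals[0], intervals[-1])
```

Each tuple corresponds to the data interval of one DAG Run. All of those runs could be launched on the same calendar day while still processing different days of data.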
